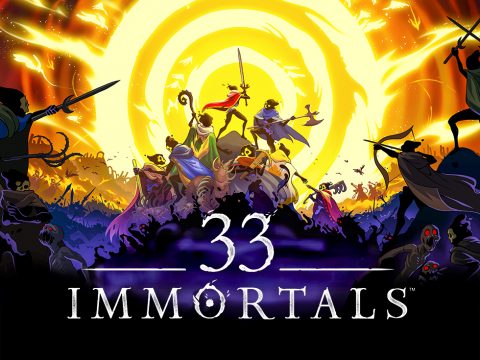

From Student Prototype to Online Chaos: Building Dino Party’s Multiplayer Foundation

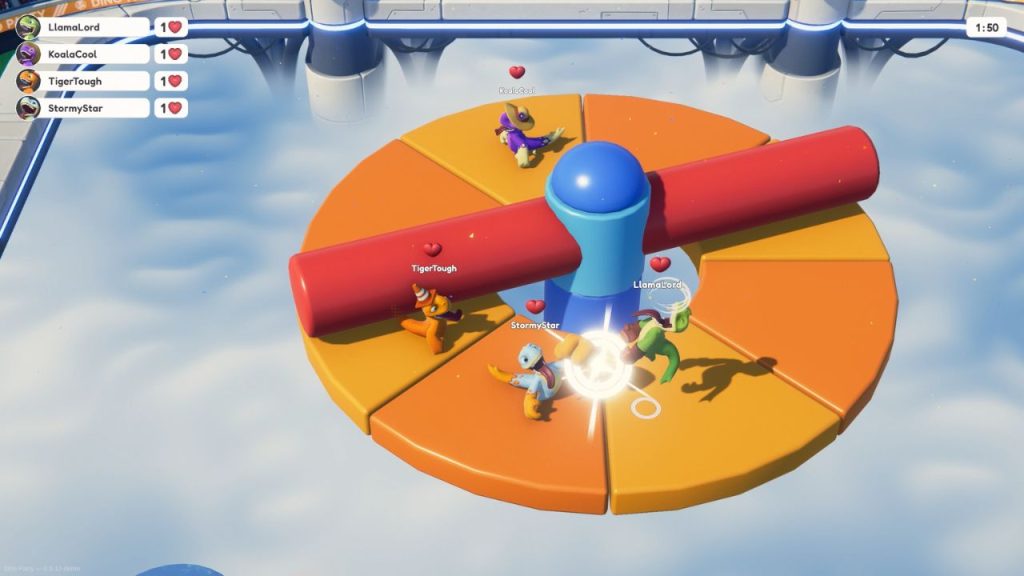

What started as a student project at HTW Berlin turned into something much bigger over the course of five years. Dino Party is the kind of game that thrives on chaos: flying dinosaurs, collapsing arenas, and unpredictable player interactions. But behind that chaos lies a series of deliberate technical decisions, rewrites, and hard-earned lessons.

For the two-person team at Studio Nachtwerk, the journey wasn’t just about adding features. It was about learning when to simplify, when to rebuild, and when to rely on the right tools. Multiplayer, in particular, forced critical architectural decisions early, something many teams underestimate when moving from local prototypes to online experiences.

In this interview, the Dino Party team shares how they transitioned from a local multiplayer prototype to a fast-paced online game, how they tackled physics replication and bandwidth constraints, and why choosing the right networking foundation, Photon Fusion, ultimately made all the difference.

Key Takeaways

Start with the right architecture early. Rebuilding a game for multiplayer later is possible but costly. Choosing a networking framework from the beginning can save years of rework and accelerate development.

Focus on the core gameloop before scaling. Early development was slowed by feature creep. Only after intensive playtesting and simplification did the team find the gameplay loop that worked.

Optimize by sending less data, not more. From destructible environments to moving platforms, the team consistently minimized bandwidth by networking only essential state (like a single bit for chunk detachment).

Client-side prediction is essential but not perfect. Instant responsiveness requires prediction, but small input delays (like 50ms) can significantly reduce mispredictions and improve overall consistency.

Leverage existing networking tools to move faster. Using Photon Fusion allowed the team to avoid low-level networking complexity and focus on gameplay, iteration, and polish.

Design transitions and systems with synchronization in mind. Keeping gameplay within a persistent environment (instead of switching scenes) enabled seamless, lightweight state transitions across all clients.

We know Dino Party started as a student project at HTW Berlin and evolved over nearly five years. Transitioning from a local prototype to an online multiplayer game is a massive leap. Can you walk us through that technical evolution? At what point did you realize your initial architecture needed to change to support the ‘gameloop’ you finally settled on?

Our development journey definitely had its share of ups and downs. After two years of development we were still searching for the right core gameloop. Whenever something didn’t quite work, our instinct was often to add more features instead of addressing the underlying design issues.

Things started to change in the third year of development. We began playtesting a lot more frequently and focused on player feedback. We boiled the concept down to its essentials, leaving us with the core gameloop Dino Party is built around today.

At that point the game was still purely local multiplayer, which worked great for testing but quickly became a limitation. With more and more players asking for online multiplayer, we started looking for a robust networking solution that could support our fast-paced gameplay. We experimented with several options before ultimately settling on Photon Fusion 2. That decision meant rewriting large parts of the game, but it gave us the foundation we needed to support the real-time multiplayer experience the game depends on today.

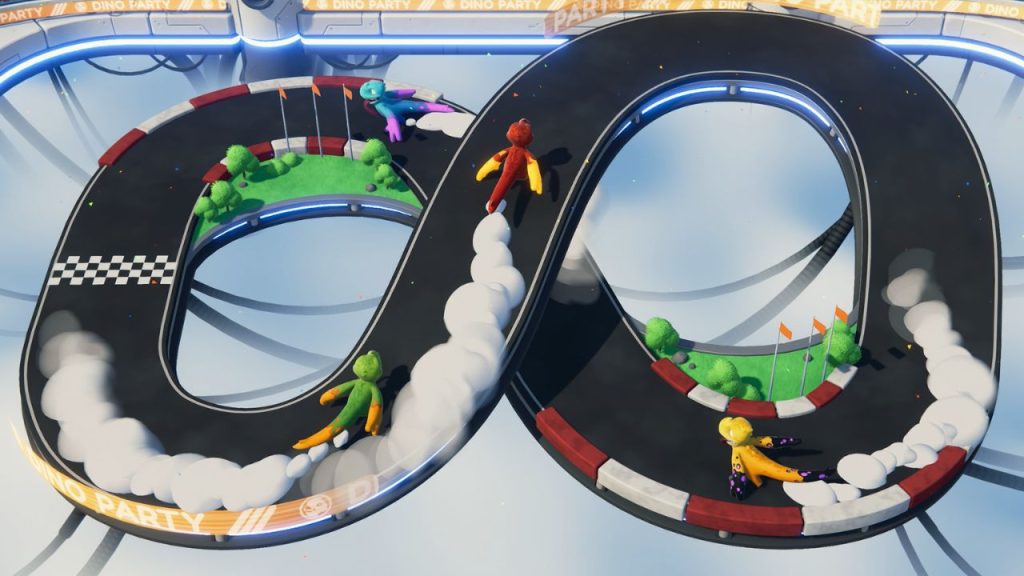

The Steam page highlights ‘silly physics’ and ‘chaos’ as core pillars of the game. Networking physics is notoriously difficult because a slight desync can ruin the experience. How did you approach the replication of rigid bodies and player movement to ensure that when a dino gets punched flying, it looks the same on every client?

Since we have a lot of chunks flying around while destruction takes place, we wanted to avoid resimulating physics. Running the physics simulation multiple times per tick would have severely impacted performance, given the number of Rigidbodies involved. Instead, we opted for a much more performant solution: Fusion comes with a state-of-the-art feature called Forecast Physics, which can predict and correct individual Rigidbodies without having to resimulate the whole local physics simulation. This allowed us to keep many local, non-networked physics objects alongside predicted ones without sacrificing performance.

Similarly, player movement was quite straightforward to implement, since it uses Fusion’s advanced KCC addon, which handles the basics of player replication and movement. We built custom processors on top of this system, which for example depenetrate players from the animated attack colliders of other players.

You mention that ‘walls, towers, and friendships’ tend to break during matches. Synchronizing destruction in real-time can be a bandwidth hog. Did you have to utilize specific replication algorithms, like switching between eventual and full consistency, to keep the debris synced without killing the network performance?

Each destructible object is pre-fractured into many different chunks. When a dino punches the object, chunks that were hit are marked as detached within a networked array. Clients then locally “detach” these chunks, activating their Rigidbody and applying forces to them. Since detached chunks do not influence player movement or other parts of the gameplay, we do not have to network their positions – the local physics engine takes care of the action for us. In a nutshell, we keep bandwidth and resimulation costs minimal by only networking whether or not a chunk is attached, which results in merely a single bit networked whenever a chunk detaches.
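The one-bit-per-chunk idea can be sketched outside of any engine. The snippet below is a minimal Python illustration, not the team's Fusion code: the names `DestructibleState`, `detach`, and `newly_detached` are hypothetical, and the "networked array" is modeled as a plain integer bitmask. The host sets a bit when a chunk is hit; each client compares the mask it last saw with the current one and activates local physics only for the chunks whose bit flipped.

```python
from dataclasses import dataclass


@dataclass
class DestructibleState:
    """Hypothetical networked state for one pre-fractured object: one bit per chunk."""
    chunk_count: int
    detached_mask: int = 0  # bit i set => chunk i is detached

    def detach(self, chunk_index: int) -> None:
        """Host side: mark a chunk as detached. This bit is the only data that is synced."""
        if not 0 <= chunk_index < self.chunk_count:
            raise IndexError(f"chunk {chunk_index} out of range")
        self.detached_mask |= 1 << chunk_index


def newly_detached(previous_mask: int, current_mask: int) -> list[int]:
    """Client side: indices of chunks whose bit flipped from 0 to 1 since the last update."""
    changed = current_mask & ~previous_mask
    return [i for i in range(changed.bit_length()) if (changed >> i) & 1]


# Example: the host detaches two chunks; a client reacts to the changed bits.
state = DestructibleState(chunk_count=8)
last_seen = state.detached_mask
state.detach(2)
state.detach(5)
for index in newly_detached(last_seen, state.detached_mask):
    # A real client would enable the chunk's Rigidbody and apply forces locally here.
    print(f"detach chunk {index} locally")
```

Chunk positions never appear in the synced state; every client simulates the debris on its own, which is acceptable because debris does not affect gameplay.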

In a brawler, input lag is the enemy. You describe the combat as ‘fast and punchy’, which requires high precision. How did you handle input authority and lag compensation? Specifically, how do you ensure that a shield block or a ground pound registers instantly for the player, even if their ping isn’t perfect?

We predict player inputs on clients, which means local input is used immediately and the effects of that input, such as stunning another player, are visible right away. In most cases, this is unproblematic. In some scenarios, however, our local input might have been impossible: the stunned player might have actually shielded before we landed that hit. When predicting player inputs, these mispredictions are simply unavoidable; they are a byproduct of executing actions instantly while being connected to other peers with some latency.

To reduce the likelihood of these mispredictions, we added a tiny bit of local input delay (around 50ms), which is practically unnoticeable while playing. By networking inputs immediately but only consuming them after these 50ms, we can compensate for up to 50ms of round-trip latency: within this timeframe, clients know about the actions of other players before they are actually executed, and thus there are no mispredictions.
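The input-delay idea can be illustrated with a small scheduling buffer. This is a hedged sketch rather than Fusion's input API: the 60 Hz tick rate, the `DelayedInputBuffer` class, and its method names are assumptions made for the example. Each input is stamped with a future execution tick and (conceptually) sent right away; a remote input that arrives before its execution tick can be applied without any prediction.

```python
TICK_RATE = 60        # assumed simulation rate in ticks per second
INPUT_DELAY_MS = 50   # delay quoted in the interview
DELAY_TICKS = round(INPUT_DELAY_MS * TICK_RATE / 1000)  # 3 ticks at 60 Hz


class DelayedInputBuffer:
    """Hypothetical buffer that schedules inputs for a future tick."""

    def __init__(self, delay_ticks: int) -> None:
        self.delay_ticks = delay_ticks
        self._scheduled: dict[int, list[tuple[str, str]]] = {}

    def submit_local(self, next_tick: int, player: str, action: str) -> int:
        """Queue a local input and return its execution tick.

        In a real game the (player, action, execute_tick) triple would also be
        sent to the other peers immediately.
        """
        execute_tick = next_tick + self.delay_ticks
        self._scheduled.setdefault(execute_tick, []).append((player, action))
        return execute_tick

    def receive_remote(self, next_tick: int, player: str, action: str,
                       execute_tick: int) -> bool:
        """Store a remote input; return True if it arrived before its tick was simulated.

        A False result means the tick already ran without this input, which is
        the misprediction case that needs a correction.
        """
        if execute_tick < next_tick:
            return False
        self._scheduled.setdefault(execute_tick, []).append((player, action))
        return True

    def consume(self, tick: int) -> list[tuple[str, str]]:
        """Return and remove all inputs scheduled for the given tick."""
        return self._scheduled.pop(tick, [])
```

With these assumed numbers, a punch submitted while tick 100 is next executes at tick 103; a remote shield stamped for tick 102 that arrives while tick 101 is next is known in time, so the punch resolves against the correct state.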

One of your key features is the stadium morphing seamlessly between different minigames and maps. From a state-transfer perspective, that sounds like a heavy lift. How do you manage the game state during those transitions to ensure all players load into the new hazards simultaneously without hanging or dropping connection?

A key decision we made early on was that we didn’t want to move players between levels, but rather bring the new environment to the players. All gameplay takes place inside the same persistent stadium. Minigames take place in the center of this stadium, which we call the “stage”. When a round ends, the current stage simply moves down into the clouds. At this point we instruct Fusion to despawn the current stage and spawn the next one. Fusion handles the replication of those prefabs across all clients and keeps the state in sync – every player sees the new stage appear at the same moment. Because scenes are never changed and only a single prefab changes, the transition is extremely lightweight. From the player’s perspective it just looks like the previous stage collapses into the clouds while a new one rises, while technically it’s just a synchronized prefab swap managed by Fusion.

For a 2-to-4 player party game, choosing the right topology is critical for cost and stability. Did you opt for a Client-Host architecture to keep costs down for your indie team, or did you utilize dedicated server orchestration? What were the trade-offs you considered regarding host migration and security?

Since we are only two people working on the game with practically no budget and no prior experience with developing multiplayer games or server hosting, we opted for the simpler route, where one player acts as the host, eliminating the need for dedicated servers. Dino Party is a casual party game – giving authority to the host player is a trade-off that seemed logical for this genre. And if competitive play ever becomes a thing, we can always upgrade our current architecture by using dedicated servers instead of letting one player be the host.

With up to 4 players and 40+ maps, plus the customization options involved, there is a lot of data moving around. Did you encounter bottlenecks with bandwidth early on? We’d love to hear about any specific optimization techniques or interest management strategies you used to keep the packet size down.

By looking at the sample projects and talking to members of the Photon Discord, we tried to adopt best practices early on. One example of bandwidth optimization is our moving platforms: instead of networking the position of each platform every tick, we just network the tick at which the movement began. Since each client knows the waypoints of the platform, clients can calculate where the platform should be by extrapolating from the start tick.
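As a rough illustration of deriving a platform's position from a single start tick, consider the Python sketch below. It makes assumptions the interview does not state: platforms move at constant speed, the waypoints form a closed loop, and the simulation runs at 60 ticks per second. The function name `platform_position` and its parameters are hypothetical.

```python
import math


def platform_position(waypoints, speed, start_tick, current_tick, tick_rate=60):
    """Position on a closed waypoint loop, computed only from the networked start tick.

    waypoints: list of coordinate tuples known to every client (not networked)
    speed: units per second (assumed constant)
    """
    # Pair each waypoint with the next one, wrapping around to close the loop.
    segments = list(zip(waypoints, waypoints[1:] + waypoints[:1]))
    lengths = [math.dist(a, b) for a, b in segments]
    total = sum(lengths)
    if total == 0:
        return tuple(waypoints[0])

    elapsed_seconds = max(0, current_tick - start_tick) / tick_rate
    distance = (elapsed_seconds * speed) % total  # distance travelled within the current lap

    for (a, b), length in zip(segments, lengths):
        if length > 0 and distance <= length:
            t = distance / length
            return tuple(pa + (pb - pa) * t for pa, pb in zip(a, b))
        distance -= length
    return tuple(waypoints[0])  # floating-point fallback: lap completed


# Example: a platform shuttling between two points at 2 units per second.
path = [(0.0, 0.0), (10.0, 0.0)]
print(platform_position(path, speed=2.0, start_tick=120, current_tick=180))
```

Because every client evaluates the same function with the same start tick, the platform needs no per-tick position updates; only the start tick has to be sent, once.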

By asking yourself what the minimum amount of information is that clients need to accurately replicate something, you can save a lot of bandwidth.

You mentioned that this project was built in your spare time between day jobs. Multiplayer engineering often requires a dedicated team just for netcode. How did you manage to offload that complexity? Did using a third-party state-sync SDK allow you to focus more of your limited time on gameplay polish rather than writing low-level socket code?

Although there was an initial learning curve, Fusion made sense to us very early on. Not having to worry about low-level problems regarding packets, compression and state sync is definitely a big time-saver. Once you get the hang of it, new features are extremely simple to implement – in most cases it comes down to inheriting your component from NetworkBehaviour, adding [Networked] attributes to the variables you want to sync and then handling state changes within callback functions. After working with Fusion for almost two years now, it has really become second nature.

Looking back at your 5-year journey from a ‘one-year plan’ to a release right around the corner, what is the one piece of technical advice you would give to other indie developers who are terrified of adding multiplayer to their games? What was the technical hurdle you wish you had solved sooner?

If you are considering adding multiplayer to your game, start out with a multiplayer framework immediately; don’t try to build the game first and then add multiplayer on top of it afterwards. This is what we did, and it cost us a lot of time that we could have used to improve the game instead. While it’s not impossible to rewrite a project in later stages of development, you fare a lot better choosing a multiplayer framework and working with it from the get-go.

Conclusion

Dino Party’s journey is a clear example of how multiplayer development is as much about decision-making as it is about engineering. The team didn’t just solve technical challenges; they learned when to rethink their approach entirely.

From rebuilding their architecture to optimizing every bit of network traffic, their path reflects a broader truth: multiplayer is not something you “add later.” It shapes the entire game from the ground up.

And in the end, that shift is exactly what unlocked the game’s full potential. As the team puts it:

“Photon Fusion enabled us to turn Dino Party from a local multiplayer prototype into a fast and responsive online multiplayer game that players can enjoy together anywhere.”

By choosing a solution that handled the complexity of synchronization, prediction, and state management, they were able to focus on what really matters: creating chaotic, fun, and memorable gameplay.

For other indie developers, the takeaway is simple but critical: start with the right foundation, and you’ll spend less time fighting the tech, and more time building the game players actually want to play.